“Necessity is the mother of invention.” We have all encountered this phrase in our science textbooks or while reading about most scientific innovations. Be it the invention of the cell phone, the internet, or the World Wide Web, each of these has brought a paradigm shift in the world of technology.

While technology is rapidly expanding its horizons, Artificial Intelligence remains the buzzword that every business can’t stop gushing about. Generative AI, a subfield of artificial intelligence, has gained massive momentum since OpenAI launched ChatGPT in 2022. Since then, generative AI use cases have found their way into major industries, including healthcare, banking and finance, gaming, and supply chain, marking the beginning of the Gen AI revolution.

But what is Generative AI? Is it only limited to text or image generation, or can it help in other fields as well? In this blog, we will be answering some of the burning questions around Generative AI and generative AI use cases across industries.

Want to implement cutting-edge GenAI solutions in your company? Mindpath offers end-to-end AI Development Services for deploying generative AI applications efficiently.

Introduction to Generative AI

Let’s start with the basics: what is Generative AI? A magic lamp that fulfils all your wishes; well, almost! Generative AI learns from a large pool of data that includes text, images, code, and other data types.

With the help of machine learning models and neural networks, this large volume of data is analyzed, patterns are discovered, and output is delivered. Generative AI, as the name suggests, is not limited to analyzing data; it creates new material such as written content, images, videos, and even code.

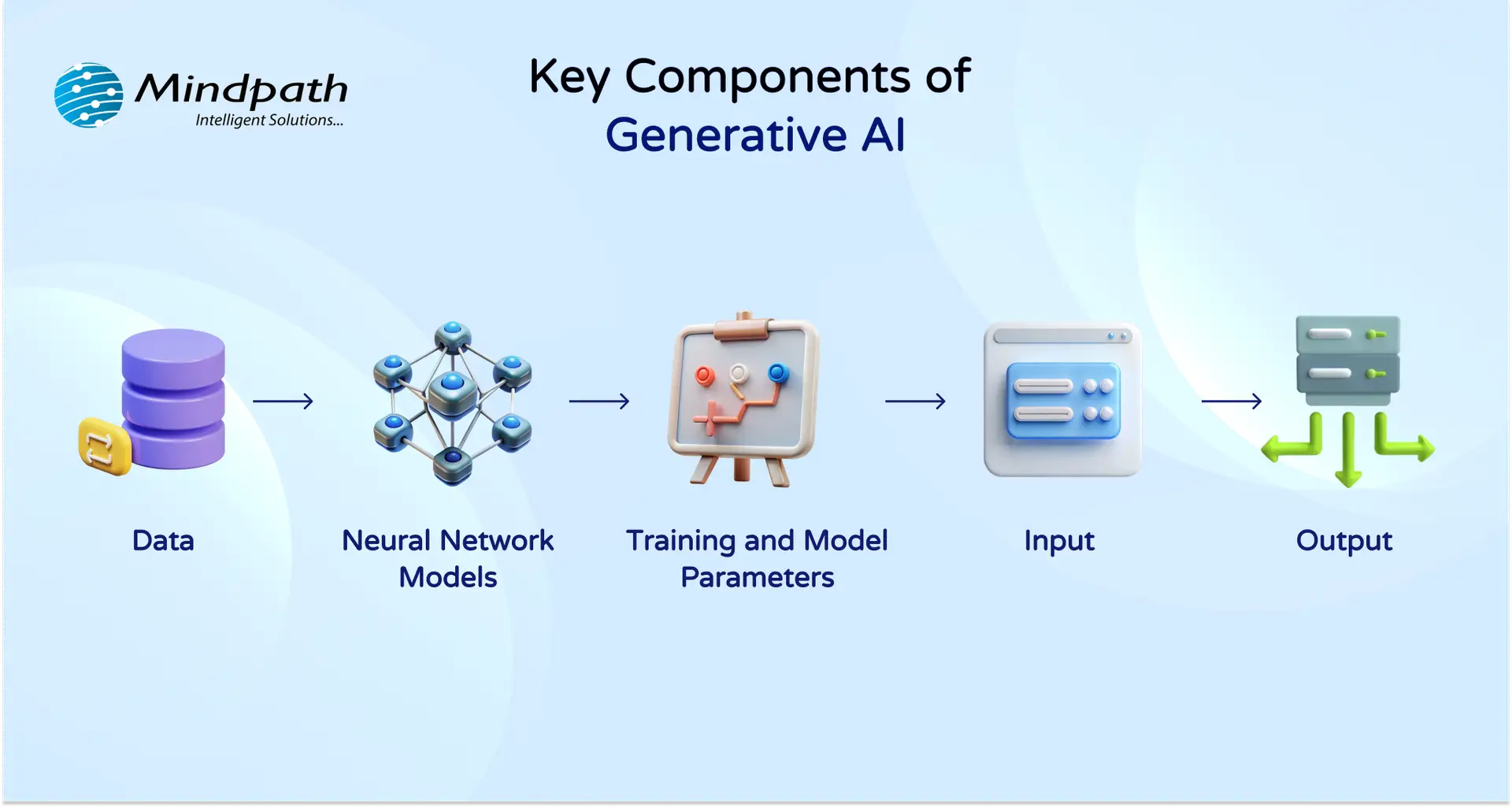

Here are some of the key components of Generative AI:

- Data: Raw data such as documents, eBooks, ledgers, images, videos, code, and a lot more that Generative AI uses to learn.

- Neural Network Models: Loosely inspired by the human brain, they help AI models make sense of all the raw data fed into them.

- Training and Model Parameters: Training adjusts the model’s parameters (its internal numeric weights) so that it can cut through the noise and generate accurate output.

- Input: When you ask a Gen AI model to write a cover letter for a job, create a report based on Excel data, or generate a Ghibli-style image to keep up with current trends, that request is the “input”.

- Output: Whatever result a Gen AI model, such as ChatGPT, Midjourney, or Bard, delivers for your query is the output.

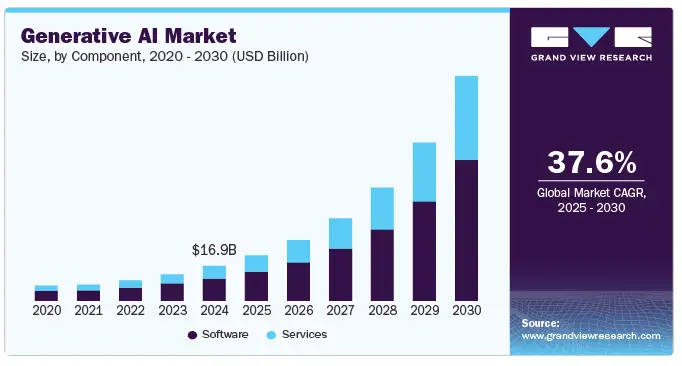
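To make these components concrete, here is a minimal sketch in Python. It is a toy word-chain generator, not a neural network, and it is only meant to illustrate the flow from data to learned patterns to input and output. The corpus, the function names `train` and `generate`, and the `seed` parameter are all illustrative assumptions, not part of any real Gen AI product.

```python
import random
from collections import defaultdict


def train(corpus):
    """Learn which word follows which: a stand-in for 'discovering patterns'."""
    words = corpus.split()
    transitions = defaultdict(list)
    for current, following in zip(words, words[1:]):
        transitions[current].append(following)
    return dict(transitions)


def generate(model, prompt, max_words=8, seed=0):
    """Start from the prompt (input) and sample new text (output)."""
    rng = random.Random(seed)  # fixed seed so the toy example is repeatable
    output = [prompt]
    for _ in range(max_words - 1):
        candidates = model.get(output[-1])
        if not candidates:  # no learned continuation for this word
            break
        output.append(rng.choice(candidates))
    return " ".join(output)


# Data: a tiny, made-up training corpus (assumption for illustration only)
corpus = (
    "generative ai learns patterns from data and "
    "generative ai creates new text from patterns"
)
model = train(corpus)               # training: the learned "parameters"
print(generate(model, "generative"))  # input -> output
```

Real generative models replace the simple word-count table with a neural network holding billions of parameters, but the overall loop of learning from data and then producing new output from a prompt is the same idea.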

Generative AI is gaining momentum: the global market is valued at USD 37.89 billion in 2025 and is projected to reach USD 1,005.07 billion by 2034, at a CAGR of 44.20%. (Source: Precedence Research)
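For readers who want to sanity-check figures like these, the short Python sketch below shows how a compound annual growth rate (CAGR) relates a starting value to a projected one. The helper names `implied_cagr` and `project` are illustrative, and the nine-year span (2025 to 2034) is an assumption about how the forecast period is counted.

```python
def implied_cagr(start_value, end_value, years):
    """Annual growth rate that turns start_value into end_value over `years`."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value, rate, years):
    """Value after compounding `rate` annually for `years`."""
    return start_value * (1 + rate) ** years


start, end = 37.89, 1005.07  # USD billions, figures quoted above
years = 2034 - 2025          # assumed forecast span

rate = implied_cagr(start, end, years)
print(f"Implied CAGR: {rate:.2%}")
print(f"2034 value at 44.20%: {project(start, 0.4420, years):.2f} billion USD")
```

The implied rate comes out close to the quoted 44.20%. Small gaps between the two are normal and may come from rounding or from a different base year in the original report.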

Generative AI Use Cases Across Industries

As Gen AI grows, businesses that want to take full advantage of it need a business-first roadmap for adoption. Now that we have explored what Generative AI is, let’s move on to some prominent generative AI use cases in different industries, which show that it’s more versatile than it may first appear!

1. Healthcare

According to a recent survey by the Deloitte Center for Health Solutions, 75% of leading healthcare companies across the globe are either currently experimenting with generative AI or planning to scale Gen AI in the near future.

In what areas, you may wonder? Generative AI in healthcare is being used for maintaining patient records, discovering drugs, and interpreting and analyzing medical images such as X-rays, CT scans, and MRIs, to mention a few.

Scientists are using generative AI to model molecular structures, which can help not just in creating new drug compounds but also in predicting their effectiveness.

92% of healthcare leaders see a promising future for generative AI in improving efficiency and speeding up decision-making in critical scenarios.

2. Banking

Next up is the banking and finance industry. Generative AI in banking is fueling the fintech revolution in more ways than one. It is currently being leveraged for detecting fraud, assessing the credit risk of an individual or an entity, supporting customers, automating routine tasks and compliance checks, and creating investment strategies, among others.

A recent report by KPMG revealed that around 76% of banking executives in the United States are planning to implement Gen AI for fraud detection and prevention, 68% believe it can be best used for regulatory compliance, and 62% want to leverage it to improve their customer service.

3. Gaming

One of the most exciting areas for generative AI applications could be gaming. According to Statista, over one-third of game developers across the globe are already using Gen AI tools in their studios. Procedural Content Generation (PCG) is the largest application area, accounting for almost 30% of current Gen AI use in gaming. PCG helps game developers create larger-than-life game worlds, environments, and universes that intrigue players and give them a more immersive experience.

Other than PCG, significant generative AI use cases in gaming include developing characters, detecting bugs, generating real-time content like new levels and challenges, and detecting irregularities, security breaches, and other threats.

Wondering how AI can help your business reach exceptional growth? Read our blog to learn about the importance of generative AI for business.

4. Supply Chain

When it comes to adopting Gen AI, the supply chain industry is no stranger to it. In fact, a McKinsey survey revealed that a third of global businesses are effectively using generative AI in business areas including operational process automation (66%), production planning and scheduling (47%), quality control and inspection (44%), and inventory management (43%).

Gen AI can support the supply chain industry with better demand forecasting, optimizing day-to-day operations, assessing and controlling supplier risks, quality control, fraud detection, and much more!

5. Other Industries

Apart from the above-mentioned industries, use cases of generative AI are prevalent in other industries such as sales and marketing, insurance, legal and compliance, human resources, product development, and many more.

While many still use Gen AI mainly for content, image, and code generation, its applications go well beyond that. However, leveraging Gen AI comes with its own set of challenges. Every industry and business seeking to adapt and use AI to its advantage needs to be AI-ready. There’s no one-size-fits-all guidebook or roadmap for AI adoption; rather, each business and industry needs to understand its niche and create a roadmap that generates true value.

Wondering how generative AI is influencing content creation and design strategies? Check out our blog on how generative AI is changing creative industries to see how AI-driven creativity is shaping the future of industries.

Ready to Embrace Innovation with Generative AI?

Generative AI is expanding its horizons, and businesses have started to realize its true potential. While Gen AI continues to grow, it’s critical to assess its risks, including data security, gaps in information, and overdependence.

Businesses looking to adopt AI must look beyond the hype and create a strategy that works for them. At Mindpath, we help businesses create solutions that are based on their real pain points rather than creating just another piece of software. When you talk to us, we work to understand your operations and what may make them more efficient.

Our customer-first approach helps us identify gaps and curate a solution that works for you. Thus, if you’ve been wanting to adopt AI, the right time is now! Let’s discuss how our AI development services can help you make the best of up-and-coming technologies and get your business future-ready!

Frequently Asked Questions

1. Why are generative AI use cases expanding so quickly across industries?

Generative AI use cases are expanding because businesses need faster innovation, better decision-making, and automation at scale. The technology can process vast datasets and generate meaningful outputs, helping industries respond quickly to market changes while improving efficiency, accuracy, and customer experience.

2. How can companies identify the right generative AI use cases for their industry?

Businesses should start by analyzing operational challenges, repetitive workflows, and data-heavy processes. The most effective generative AI use cases solve real business problems rather than following trends. A clear roadmap, measurable goals, and strong data infrastructure help ensure successful adoption.

3. Is generative AI suitable for regulated industries?

Yes, but with caution. Regulated industries like healthcare and finance must ensure data privacy, compliance, and transparency. Proper governance frameworks, human oversight, and secure infrastructure are essential to safely implement AI solutions without violating regulatory standards.

4. What skills are required to implement generative AI in an organization?

Organizations need a mix of AI engineers, data scientists, domain experts, and cybersecurity professionals. Beyond technical roles, leadership must understand strategy and change management. Training employees to collaborate with AI tools is equally important for long-term success.

5. What risks should businesses consider before scaling generative AI solutions?

Key risks include data bias, security vulnerabilities, inaccurate outputs, and overdependence on automation. Businesses should validate AI-generated results, maintain human supervision, and establish ethical guidelines. A balanced approach ensures innovation without compromising reliability or trust.