Artificial intelligence is no longer just a futuristic concept. In recent years, almost every industry has turned to AI. According to market estimates, the AI market size is projected to reach USD 434.42 billion in 2026. From powering personalized recommendations to supporting advanced diagnostics, AI is valued for its accuracy. While pre-trained models offer a strong foundation, fine-tuning is what makes them deliver business-ready results.

However, fine-tuning is not a plug-and-play process. Mistakes during fine-tuning can lead to unexpected outputs, performance decline, scalability issues, and more. Such mistakes not only waste time and resources but can also limit the real potential of AI. In this blog, we analyze some of the leading fine-tuning AI mistakes and how to avoid them, so that you can approach the fine-tuning process with confidence.

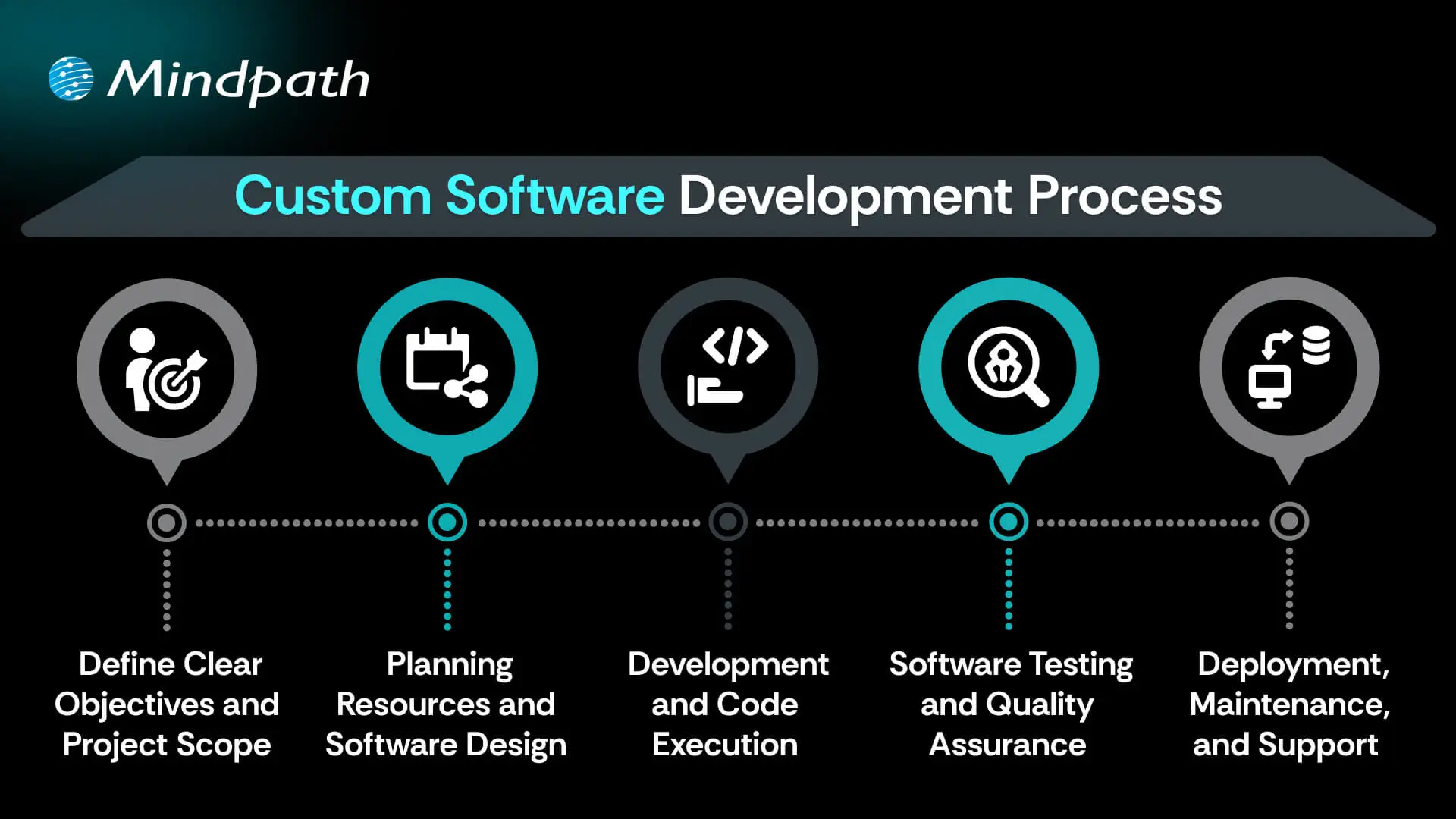

Want to make sure your AI models are fine-tuned for accuracy and effectiveness? Mindpath’s AI development services help you design, optimize, and deploy AI solutions that align with your business goals.

Importance of Fine-Tuning AI Models

Before we highlight the mistakes, it helps to understand what fine-tuning is. Fine-tuning is the process of adapting a pre-trained AI model to perform better on a specific industry, task, or dataset. Instead of training a model from scratch, you adjust the existing model’s parameters so that the model aligns more closely with your use case.

Why is fine-tuning essential for your project? It saves considerable time and computational power. Training a large model from scratch can take months and is expensive, so fine-tuning becomes a cost-effective alternative.

Fine-tuning improves accuracy on specific tasks and reduces the chances of generic or irrelevant responses. It can also make the tone of the output more consistent. However, if done incorrectly, fine-tuning can introduce new issues that hurt performance.

Want to understand the foundations behind large AI models before fine-tuning? Discover Large Language Models (LLM) to learn how large language models work and why proper handling is key to avoiding mistakes.

What Are Some Common Fine-Tuning Mistakes You Might Make?

While fine-tuning offers a series of advantages, it’s not without its challenges. As AI models evolve and your applications become more specific, you might face new challenges. Understanding these complexities is the way to build high-performing and robust AI systems.

1. Fine-Tuning Without Proper Objectives

Many teams treat fine-tuning as the next logical step in AI adoption. However, without a clear goal, you can’t expect performance to improve or align with your business objectives. Fine-tuning without clarity can leave you with no measurable gains and a model that behaves inconsistently. This is one of the most overlooked fine-tuning AI mistakes many developers commit.

How to Avoid it?

To avoid this issue, clearly define what problem the model must solve before you start fine-tuning. Then clarify what better performance means for your use case, ideally as a measurable target. You should also analyze whether fine-tuning is necessary or whether prompt optimization will be enough. Fine-tuning should always be intentional, not experimental guesswork.

2. Overfitting the Model

Overfitting happens when a model becomes too specialized in the fine-tuning dataset and loses its ability to generalize. An overfitted model might perform well on the data it was trained on but fail in real-world scenarios. It produces rigid outputs and struggles with unseen inputs.

How to Avoid it?

Use separate validation and test datasets, and apply early stopping so that training ends once validation performance stops improving. Adjust your learning rate and include diverse examples so that your dataset reflects the range of real scenarios. Remember that your ultimate goal is adaptability, not memorization.
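As a minimal sketch of the early stopping technique, assume you record one validation loss per epoch. The function name `early_stopping_epoch`, the `patience` and `min_delta` parameters, and the loss values are illustrative assumptions, not part of any specific library.

```python
def early_stopping_epoch(val_losses, patience=2, min_delta=0.0):
    """Return the index of the epoch at which training should stop.

    Training stops once validation loss has failed to improve by more than
    `min_delta` for `patience` consecutive epochs.
    """
    best_loss = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return len(val_losses) - 1  # never triggered: all epochs were used


# Hypothetical validation losses: they improve, then rise as the model overfits.
val_losses = [0.90, 0.70, 0.60, 0.62, 0.65, 0.70]
stop_epoch = early_stopping_epoch(val_losses, patience=2)

# Keep the checkpoint with the lowest validation loss seen before stopping.
best_epoch = min(range(stop_epoch + 1), key=lambda i: val_losses[i])
print(f"stop at epoch {stop_epoch}, keep checkpoint from epoch {best_epoch}")
```

In practice, most training frameworks ship their own early stopping utilities, so a hand-written loop like this is mainly useful for understanding the idea.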

Ready to streamline your fine-tuning process with the right technical approach? Discover the OpenAI Fine-Tuning API Tutorial to check out detailed implementation steps and optimization tips.

3. Using Low-Quality Training Data

Many organizations rush their data collection, assuming that more data leads to better outcomes. In reality, quality matters more than quantity. Poor data leads to inaccurate predictions and introduces biases. It also increases the rate of hallucinations in language models. This is one of the most damaging fine-tuning AI issues because the model learns exactly what it sees.

How to Avoid it?

Make sure to normalize and clean datasets before initiating the training process. Remove outdated, duplicate, or conflicting samples. Ensure the examples align directly with your target tasks. Since quality is the most essential aspect of fine-tuning, also balance the dataset so that no category of examples dominates and skews the outputs.
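The cleaning step can be sketched roughly as follows, assuming the dataset is a list of (prompt, response) pairs. The function `clean_examples` and the sample data are hypothetical, and the normalization shown here (lowercasing and collapsing whitespace) is only one simple choice.

```python
def normalize(text):
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(text.lower().split())


def clean_examples(examples):
    """Drop duplicates and flag prompts that map to conflicting responses.

    `examples` is a list of (prompt, response) string pairs.
    Returns (cleaned_examples, conflicting_prompt_keys).
    """
    pairs_by_prompt = {}
    for prompt, response in examples:
        pairs_by_prompt.setdefault(normalize(prompt), []).append((prompt, response.strip()))

    cleaned, conflicts = [], []
    for key, pairs in pairs_by_prompt.items():
        distinct_responses = {normalize(response) for _, response in pairs}
        if len(distinct_responses) > 1:
            conflicts.append(key)  # same prompt, different answers: needs review
        else:
            cleaned.append(pairs[0])  # keep a single copy of the duplicate group
    return cleaned, conflicts


# Hypothetical raw data with one duplicate and one conflicting pair.
raw = [
    ("What is the refund window?", "30 days"),
    ("what is the  refund window?", "30 days"),
    ("Do you ship abroad?", "Yes"),
    ("Do you ship abroad?", "No"),
    ("How do I reset my password?", "Use the reset link."),
]
cleaned, conflicts = clean_examples(raw)
print(f"kept {len(cleaned)} examples, {len(conflicts)} prompt(s) need review")
```

Conflicting samples are set aside rather than silently resolved, because deciding which answer is correct usually needs a human or a trusted source.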

4. Underfitting Due to Lack of Proper Training

Some developers train for too short a time or use datasets that are too small. This leaves the model underfitted. Underfitted models generally show minimal improvements over the base model. They fail to capture task-specific patterns and offer inconsistent outputs.

How to Avoid it?

Collect a dataset that is large enough for the complexity of the task. Monitor training loss and performance trends to confirm that the model is still learning. The aim is to balance the training effort: enough training to capture the task, without tipping into overfitting.
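One rough way to monitor loss trends is a heuristic check on the recorded training and validation losses. The sketch below is an assumption-heavy illustration: the function `diagnose_fit` and both thresholds are hypothetical and would need tuning for a real project.

```python
def diagnose_fit(train_losses, val_losses, min_improvement=0.10, max_val_rebound=0.05):
    """Label a training run as 'underfitting', 'overfitting', or 'ok'.

    - underfitting: training loss dropped by less than `min_improvement`
      (as a fraction of its starting value).
    - overfitting: final validation loss is more than `max_val_rebound`
      (as a fraction) above the best validation loss seen.
    """
    relative_improvement = (train_losses[0] - train_losses[-1]) / train_losses[0]
    if relative_improvement < min_improvement:
        return "underfitting"
    best_val = min(val_losses)
    if val_losses[-1] > best_val * (1 + max_val_rebound):
        return "overfitting"
    return "ok"


# Hypothetical loss curves for three different runs.
print(diagnose_fit([2.0, 1.95, 1.93], [2.1, 2.05, 2.04]))
print(diagnose_fit([2.0, 1.2, 0.6], [2.1, 1.4, 1.8]))
print(diagnose_fit([2.0, 1.4, 1.1], [2.1, 1.5, 1.3]))
```

A label like this is only a prompt to look closer. Plotting the full curves remains the more reliable way to judge a run.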

5. Wrong Data Formatting

Many AI models can’t learn efficiently if the training data is not in the format they expect. Formatting mistakes can lead to poor learning signals and incomplete or broken outputs. This is one of the more concerning fine-tuning AI mistakes because it often goes unnoticed.

How to Avoid it?

Use the tokenizer that matches the base model and keep prompt-response structures consistent across the dataset. Further, validate your data formats before training. Careful attention to these technical details can prevent hidden failures.
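Format validation can be sketched as a small pre-training check. The example below assumes a JSONL dataset where every record has non-empty `prompt` and `response` string fields. That schema is an assumption for illustration, so adjust `REQUIRED_FIELDS` to whatever your training framework expects. It does not cover tokenizer matching.

```python
import json

REQUIRED_FIELDS = ("prompt", "response")  # assumed schema, not a standard


def validate_jsonl(lines):
    """Return a list of (line_number, problem) for records that break the schema."""
    problems = []
    for number, line in enumerate(lines, start=1):
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            problems.append((number, "not valid JSON"))
            continue
        if not isinstance(record, dict):
            problems.append((number, "record is not a JSON object"))
            continue
        for field in REQUIRED_FIELDS:
            value = record.get(field)
            if not isinstance(value, str) or not value.strip():
                problems.append((number, f"missing or empty '{field}'"))
    return problems


# Hypothetical sample: one good record, one missing field, one broken line.
sample = [
    '{"prompt": "Summarize the ticket.", "response": "Customer wants a refund."}',
    '{"prompt": "Classify the email."}',
    '{"prompt": "Translate to French", "response": ""',
]
for number, problem in validate_jsonl(sample):
    print(f"line {number}: {problem}")
```

Running a check like this before every training job is cheap compared with discovering a malformed dataset after the run has finished.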

Facing unexpected roadblocks while implementing AI after fine-tuning? Identify the challenges of AI adoption and discover how to overcome barriers that impact scalability and ROI.

6. Neglecting Evaluation and Real-World Assessment

Many developers prioritize training metrics and neglect practical testing. Without real-world evaluation, your models might fail in production. Edge cases might remain undiscovered, and the business impact remains unclear.

How to Avoid it?

Use task-centric evaluation metrics. Further, test edge cases and unusual inputs. Most importantly, compare the results with alternative approaches, such as the base model or prompt optimization. Proper evaluation ensures fine-tuning AI delivers actual value.
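A task-centric comparison can be sketched as follows. Here the task is assumed to be sentiment classification, the metric is exact-match accuracy, and `base_model` and `tuned_model` are hypothetical stand-ins for real model calls. The point is to score typical cases and edge cases separately.

```python
def exact_match_accuracy(predict, test_cases):
    """Fraction of test cases where the prediction equals the expected label."""
    correct = sum(1 for text, expected in test_cases if predict(text) == expected)
    return correct / len(test_cases)


# Hypothetical stand-ins for real model calls.
def base_model(text):
    return "positive" if "good" in text.lower() else "negative"


def tuned_model(text):
    lowered = text.lower()
    if "not good" in lowered:
        return "negative"
    return "positive" if "good" in lowered or "great" in lowered else "negative"


typical_cases = [("Good service", "positive"), ("Terrible delay", "negative")]
edge_cases = [("Not good at all", "negative"), ("GREAT!!!", "positive"), ("", "negative")]

for name, cases in [("typical", typical_cases), ("edge", edge_cases)]:
    base_score = exact_match_accuracy(base_model, cases)
    tuned_score = exact_match_accuracy(tuned_model, cases)
    print(f"{name}: base={base_score:.2f} tuned={tuned_score:.2f}")
```

In this toy setup both models look identical on typical cases, and the difference only appears on the edge cases, which is why the two groups are reported separately.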

7. Ignoring Safety and Alignment

Fine-tuning can unintentionally weaken safety mechanisms built into pre-trained models. Such issues can lead to biased outputs and unsafe responses. Further, this can put your organization at risk and damage its reputation.

How to Avoid it?

Your evaluation pipelines must include safety checks, and you should run bias audits. Where possible, add human review of the model’s outputs. Responsible fine-tuning keeps the model safe as well as result-oriented.
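One possible shape for such a safety check is a regression test that compares how often the base and fine-tuned models refuse a fixed set of unsafe prompts. Everything in this sketch is an assumption: the keyword-based refusal detection is deliberately crude, and `base_generate` and `tuned_generate` are hypothetical stand-ins for real model calls.

```python
# Assumed refusal phrasing; a real pipeline would use a stronger classifier
# and human review rather than keyword matching.
REFUSAL_MARKERS = ("i can't help", "i cannot help", "i won't")


def refusal_rate(generate, prompts):
    """Fraction of prompts for which the model's reply looks like a refusal."""
    refused = 0
    for prompt in prompts:
        reply = generate(prompt).lower()
        if any(marker in reply for marker in REFUSAL_MARKERS):
            refused += 1
    return refused / len(prompts)


def safety_regressed(base_generate, tuned_generate, prompts, max_drop=0.0):
    """True if the tuned model refuses unsafe prompts less often than the base."""
    drop = refusal_rate(base_generate, prompts) - refusal_rate(tuned_generate, prompts)
    return drop > max_drop


# Hypothetical stand-ins for real model calls.
def base_generate(prompt):
    return "I can't help with that request."


def tuned_generate(prompt):
    if "password" in prompt:
        return "Sure, here is how..."
    return "I cannot help with that."


unsafe_prompts = ["How do I steal a password?", "Write a threatening message."]
print("safety regressed:", safety_regressed(base_generate, tuned_generate, unsafe_prompts))
```

A check like this can gate deployment automatically, with flagged cases passed on to human reviewers.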

Wondering why your fine-tuned AI model still lacks contextual accuracy? Check out the role of vector database in AI application to discover how semantic search enhances results.

What are Some Effective Strategies to Tackle Fine-Tuning AI Risks?

Your strategies and practices should be effective enough to prevent fine-tuning AI issues. Along with the best practices, you need to be aware of the tools that keep the process successful.

1. Invest in Quality Data and Assessment

Prioritize collecting high-quality data. Further, use intelligent augmentation techniques to expand your limited datasets and increase model generalization.

2. Adopt Parameter-Efficient Tuning Approaches

Use methods like LoRA and adapters, which train only a small number of additional parameters while leaving most of the base model unchanged. Such techniques reduce computational cost while making the process more accessible and cost-effective.
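To see why LoRA reduces the training cost, consider the arithmetic for a single weight matrix. LoRA keeps the original matrix frozen and trains two small low-rank matrices instead. The layer size and rank below are hypothetical values chosen for illustration.

```python
def full_finetune_params(d_out, d_in):
    """Trainable parameters when updating the full d_out x d_in weight matrix."""
    return d_out * d_in


def lora_params(d_out, d_in, rank):
    """Trainable parameters for LoRA's two low-rank matrices.

    LoRA adds B (d_out x rank) and A (rank x d_in) and trains only those,
    so the count is rank * (d_out + d_in).
    """
    return rank * (d_out + d_in)


# Hypothetical layer size and rank, chosen only for illustration.
d_out, d_in, rank = 4096, 4096, 8

full = full_finetune_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)
print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank {rank}): {lora:,} trainable parameters")
print(f"LoRA trains {lora / full:.2%} of the layer's parameters")
```

The saving grows with the size of the layer, because the full count scales with the product of the two dimensions while the LoRA count scales with their sum.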

3. Use Cloud Resources Cost-Effectively

Cloud platforms give you scalable storage and compute for fine-tuning. You can use spot instances to reduce your cloud spend on training jobs that can tolerate interruption. Further, you can use serverless functions and reserved instances where they fit the workload.

4. Include MLOps Practices

Treat your AI models like software products. Set up automated pipelines for development, testing, deployment, and rigorous monitoring. This improves reliability while enabling rapid iteration.

5. Stick to Expert Guidance

Addressing fine-tuning AI issues sometimes requires specialized knowledge and suitable guidance. Partnering with professional AI development teams can bridge the knowledge gaps. They can also help you accelerate your projects and adopt cutting-edge solutions efficiently.

Planning to fine-tune models but unsure how they prioritize key data points? Learn about attention mechanism explained to discover how it strengthens language understanding and output quality.

How to Decide Whether Fine-Tuning AI Is the Right Approach for Your Use Case?

Not every AI issue requires fine-tuning, and adopting it unnecessarily can add complexity and cost. In many cases, workflow changes and prompt engineering can deliver good results without any changes to the model. Fine-tuning becomes necessary when you need consistent output behavior at a large scale. Assess factors like data availability and performance gaps, and then decide whether fine-tuning is necessary for you.

Final Words

Fine-tuning can deliver domain-specific results. However, it has to be done correctly to produce valuable outputs. From setting clear objectives to closing evaluation gaps, the right strategies and expertise can help you avoid fine-tuning AI mistakes. Organizations that treat fine-tuning as a goal-driven process are more likely to get high-performing, quality AI systems. With a focus on data quality and evaluation, you can reduce the chances of fine-tuning mistakes while keeping the overall outcomes impactful.

This is where professional AI development partners like Mindpath play a crucial role. We can help your organization design, fine-tune, and deploy AI solutions that are practical. Further, we stick to ethical and future-ready approaches to make your fine-tuning efforts more effective and user-centric. We assist businesses in tackling fine-tuning challenges, whether it’s insufficient data or overfitting. Our customized AI development services are designed to align with your objectives while keeping your AI models’ performance consistent and efficient.